Subscribing to the Boundless Future of GIS

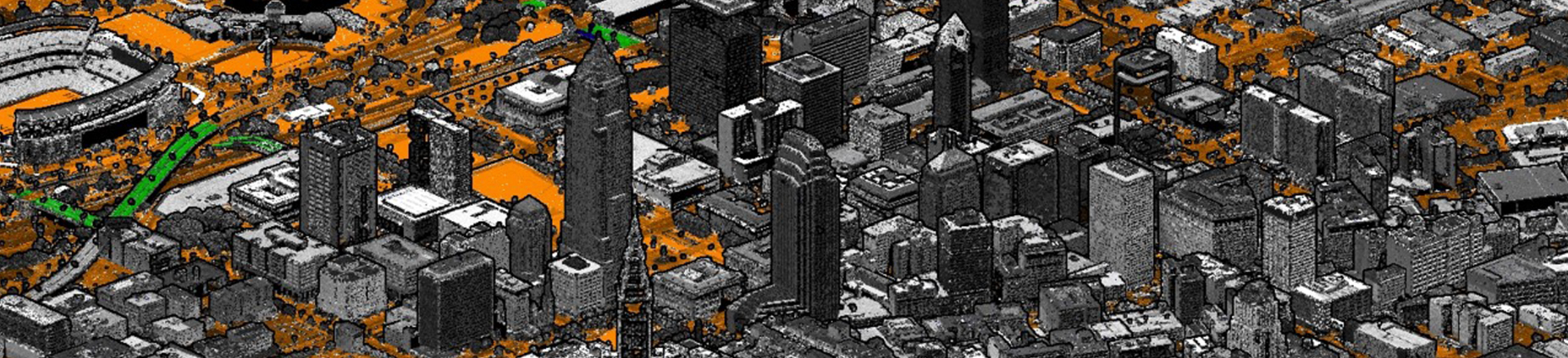

Data is at the heart of geographic information systems (GIS), because getting answers to relevant business questions depends first and foremost on having the right data, at the right time, for the right use. Esri labels this as a “Science of Where” concern, while the open source geospatial community sees it similarly as a dual question of publishing and analysis/processing. Either way, it’s clear that geospatial data is at the heart of many business decisions today. And, as the speed and volume of data continue to grow, understanding the scope and evolution of GIS is increasingly vital to the future of its applications.

Evolution of GIS Data and Systems

GIS has its roots in cartography and surveying. A notable example from 1854 was John Snow’s map of a cholera outbreak in London, in which the transmission of the infection was tracked and mapped to identify and mitigate its spread. And, as early as the 1700s, surveyors created programs such as the Public Land Survey System (PLSS) to establish a grid-based system for topographic mapping that is still used in digital form today. Over time, GIS evolved to incorporate the more sophisticated concepts and applications provided by computer technology and data.

In the 1980s and 1990s, the “canonical” GIS data, or data in their simplest form, in a typical county auditor’s office consisted of distorted aerial imagery that was manipulated to match up with an existing county map. Information associated with the individual applications of the county (such as property taxes, parcel dimensions, floodplain mapping and school district boundaries) lived in separate databases that could not be combined, compared and integrated as they are today.

Today, county auditors use GIS data to offer enhanced property search features online, which enable the public not only to view accurate aerial images of homes and neighborhoods, but also to access layers of other data that are integrated with the locations.

More Data, Faster

It’s not just the quality of data that has changed. During my 32 years with Woolpert, I’ve seen this progression firsthand as technological advancements have dramatically increased the ability to collect and process data, while decreasing associated costs.

When I started in 1988, the cost of delivering hard-copy orthorectified aerial imagery and a digital tax map for a single, highly populated county in Ohio was more than $10 million. Fast forward to this year, when Woolpert is gathering and processing high-resolution imagery for the entire state of Ohio for less than $2 million. This is possible due to technical advancements in imagery and data acquisition, such as better sensors, smarter algorithms, and more efficient networks and hardware.

But higher-resolution imagery and data make for an interesting and recurring challenge: dealing with the sheer size of the data. For example, shifting from 6-inch resolution imagery to 3-inch resolution halves the ground distance each pixel covers, so covering the same ground area with images of the same pixel dimensions takes four times as many images. That alone accounts for a massive increase in data size in bytes.
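To put a rough number on that jump in resolution, here is a minimal sketch of the arithmetic. The 5,000-foot tile size, the three 8-bit color bands and the function names are illustrative assumptions, not figures from a Woolpert project, and real deliverables are usually compressed, so actual file sizes will differ.

```python
def pixel_count(ground_width_ft, ground_height_ft, gsd_inches):
    """Pixels needed to cover a ground area at a given pixel size (in inches)."""
    pixels_per_foot = 12 / gsd_inches
    columns = round(ground_width_ft * pixels_per_foot)
    rows = round(ground_height_ft * pixels_per_foot)
    return columns * rows


def uncompressed_bytes(pixels, bands=3, bytes_per_sample=1):
    """Raw size of an image, assuming 8-bit RGB by default (an assumption)."""
    return pixels * bands * bytes_per_sample


TILE_FT = 5000  # hypothetical square tile, 5,000 ft on a side

six_inch = pixel_count(TILE_FT, TILE_FT, gsd_inches=6)
three_inch = pixel_count(TILE_FT, TILE_FT, gsd_inches=3)

print(f"6-inch: {six_inch:,} pixels, {uncompressed_bytes(six_inch):,} bytes")
print(f"3-inch: {three_inch:,} pixels, {uncompressed_bytes(three_inch):,} bytes")
print(f"Growth factor: {three_inch / six_inch:.0f}x")
```

Under these assumptions the same patch of ground goes from 100 million pixels to 400 million. Halving the pixel size quadruples the data, because the increase applies in both directions.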

Bigger Data, More Often

Geospatial data has always been relatively big. In the early 1990s, sharing a 2-gigabyte (GB) digital imagery file over the internet was a real challenge. Sure, FTP was a thing and we used it extensively, but for a client to download an entire library of those 2GB images was prohibitively slow, given dial-up modem connections at worst or a T1 line at the very best. And this is a recurring theme, not a point-in-time problem: big (geospatial) data keeps getting bigger, and we get more of that data more frequently. Goodbye gigabytes, hello tera- and petabytes!
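For a sense of what "prohibitively slow" meant, the sketch below estimates best-case transfer times for a single 2GB file. The 28.8 kbit/s modem speed is an assumption chosen for illustration (the article does not name one), 1.544 Mbit/s is the nominal T1 line rate, and the calculation ignores protocol overhead, dropped connections and shared bandwidth, so real downloads would have taken longer.

```python
def transfer_hours(size_bytes, link_bits_per_second):
    """Best-case hours to move a file over a link, ignoring all overhead."""
    seconds = size_bytes * 8 / link_bits_per_second
    return seconds / 3600


FILE_BYTES = 2 * 10**9   # one 2GB image, using decimal gigabytes
DIALUP_BPS = 28_800      # assumed dial-up modem speed
T1_BPS = 1_544_000       # nominal T1 line rate

dialup_hours = transfer_hours(FILE_BYTES, DIALUP_BPS)
t1_hours = transfer_hours(FILE_BYTES, T1_BPS)

print(f"Dial-up: about {dialup_hours:.0f} hours per image")
print(f"T1 line: about {t1_hours:.1f} hours per image")
```

With these numbers, one image takes roughly 154 hours over the modem (more than six days) and a little under three hours over the T1, before multiplying by every image in the library.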

This all sounds like a problem. More data, more pixels, more frequently. Why are companies like Woolpert doing this? It’s because public and private sector organizations know that these data are Step One in a process of analysis and insight.

Data Driven

Of course, data is an enabler. Nobody (we hope!) pays for original data or derivative data products because they want data. People want the answers that data can provide. Here’s an example:

The U.S. Geological Survey’s 3D Elevation Program (3DEP) is really big geospatial data. Using our home state of Ohio as our continued reference here, many agencies like the Ohio Department of Natural Resources, the Ohio Department of Transportation and the Ohio Environmental Protection Agency are using 3DEP data and imagery in GIS formats to locate terrestrial features such as abandoned oil wells, manage roadway assets, detect environmental impacts and so much more. That’s a lot of derivative value from one dataset.

The 3DEP example is really interesting to me because it points to a fundamental shift in how Woolpert and our clients think about the business model behind geospatial data, and thus GIS in general.

Since 3DEP data is co-owned by the state and the federal government and is made available to the public for analysis in the cloud, no single entity has to shoulder the cost of aerial acquisition and data storage. As more agencies and governments see the value of accurate, updated geospatial data, the cost to acquire this information can then be shared. Users can subscribe to a service to continually receive the latest collections. This not only eliminates the cost of duplicate efforts but also ensures the data will be seamless across agencies. It is a step toward a subscription-based data service model versus point-in-time collection and ownership.

Add to that the fact that the data is being shared multiple times and you get a great—even exponential—return on that initial investment.

Old and New Problems on Our GIS Journey

This sounds great. Lots of data available for a wide spectrum of analytical uses. It also sounds like a headache! Instead of wondering how to deliver a 2GB image across a dial-up line, now we’re talking the same language as the big data people: collecting, structuring, analyzing and distributing on a grand scale.

That’s great if you’re a Google or an Amazon or a Facebook. But if you manage a modest GIS program and are struggling to take the next step, it can feel like you’re swimming in a lake of data without a flotation device. These advances don’t eliminate questions, but they do change what you might want to ask, like: “How do I modernize our GIS?” or “How can GIS serve my needs?” or “Do I need to get on the cloud?”

Approach to Modernization

Because Woolpert was there, working hand in hand with our clients 20 years ago during initial enterprise GIS implementations, and because we’re still here, 20 years later, we are familiar with the modernization of GIS infrastructure. We approach modernization as an individualized effort to support organizations from initial conceptualization through the implementation of modernized GIS and related workflows. Modernized GIS helps organizations manage expenditures better, improve cybersecurity and reduce overall IT risks by getting vital data more quickly into the hands of the people who need it. We are here to help.

Geospatial Cloud Series 2020

July: Partnerships Expand Woolpert's Geospatial Footprint, Capabilities

August: Evaluating Geospatial SaaS Platforms to Meet Business Needs

September: Remote Sensing Data at Home in the Cloud

October-November: With Great Power of the Public Cloud Comes Even Greater Responsibility

December: Series in Review: Where the Cloud Intersects with Geospatial
